Source 1: The Moving Target Problem

Summary

Definition: Design situations, resources, and outcomes change more rapidly than designers’ understanding of them. Consequently, designers need to work without having significant experience with, and understanding of, design situations, resources, and outcomes. Waiting to obtain more knowledge and better understanding is risky as the issues may quickly become less relevant or obsolete.

Impacted Patterns: the force awakens, co-evolution of problem-solution, design-by-buzzword.

Description

The world surrounding a design activity is continuously changing. New materials, devices, computers and software products are continuously appearing, introducing new opportunities. Because these changes may happen rapidly, designers have limited time to create a useful design outcome. Design outcomes, especially those involving computing technologies [1], have a limited lifespan, and may quickly become irrelevant or obsolete.

The constant and rapid change also means that designers who spend too much time trying to understand a design situation, and who hesitate to make decisions, may end up creating a solution for an outdated problem. Myers et al. (2000), for example, described the problem that the constant development of new technologies poses for the design of user interface tools. They argued that it is difficult to build tools without having significant experience with, and understanding of, the tasks they support. However, the rapid development of new interface technologies and techniques can make it difficult for tools to keep pace. By the time a new user interface implementation task is understood well enough to produce useful tools, the task may have become less critical, or even obsolete.

Harlan Mills made a similar observation about the difficulty of developing new programming techniques amid the rapid pace of hardware development (Glass 2006):

“It’s too bad that hardware grew so fast. You know, if we’d had these new processors … for 50 years at least, mankind could really have learned how to do sequential programming. But the fact is, by the time that [Edsger] Dijkstra comes out with structured programming, we’ve got all kinds of people using multiprocessors with interrupt handling, and there’s no theory behind it, but the IBM’s, the DEC’s, the CDCs, and so on, they are all driving forward and doing this even though nobody knows how to do it.” (page 241)

The importance of making timely decisions in software design was discussed by Richardson (2011). He claimed that a software architect could spend a great deal of time designing what he thinks is a perfect system, only to present it when it is irrelevant. To illustrate the challenges of design decisions in a changing environment, Richardson uses an analogy of a hurricane meteorologist:

“If you give a hurricane meteorologist a giant pile of data about a storm spinning in the middle of the Atlantic Ocean and ask her to determine exactly where it will come ashore, she can analyze the data, construct a detailed and accurate model of the atmospheric conditions and weather patterns, run some simulations, and come up with a forecast — two months after the storm hits. It’ll probably be wrong, but not by much, a moot point for the people in the storm’s path. The problem isn’t with the forecast’s accuracy but with the time needed to prepare it. … The model favored by hurricane meteorologists is to do just enough data acquisition and analysis to be reasonably certain what the storm will do and then start telling people to get ready.”

In addition to the high pace of external change, the constant changing of design outcomes may also create internal difficulties for designers. The changeability of software (see, e.g., http://sebokwiki.org/wiki/The_Nature_of_Software), for instance, leads to continually evolving products. This evolution almost always comes with increased volume and internal complexity of such products. At some point, the sheer size and pace of growth of our systems may overpower our ability to understand them. Ebert and Jones (2009), for example, reported that premium cars in 2009 had 20 to 70 electronic control units with more than 100 million code instructions, totaling close to 1 Gbyte of software. Ebert and Jones also reported that similar embedded systems grow in size by 10% to 20% per year. The larger the system, the greater the number of both its static elements (the discrete pieces of software and hardware) and its dynamic elements (the couplings and interactions among hardware, software, and users; connections to other systems). An increase in the number of a system’s components increases the possibility of errors because at some point no one can completely understand, or test, all the interacting parts of the whole (Charette 2005). Or, as noted by Bob Colwell (2005), it is always possible to design something too complicated to get right.
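To get a rough feel for what these figures imply, the following minimal sketch works through two pieces of arithmetic. It rests on assumptions that are not part of the cited sources: growth is treated as constant and compounding, and every pair of control units is treated as a potential coupling, which is only an upper bound. The function names are hypothetical and chosen for illustration.

```python
import math


def doubling_time_years(annual_growth_rate: float) -> float:
    """Years needed for size to double, assuming constant compound growth."""
    return math.log(2) / math.log(1 + annual_growth_rate)


def max_pairwise_couplings(component_count: int) -> int:
    """Upper bound on distinct pairs of components: n * (n - 1) / 2."""
    return component_count * (component_count - 1) // 2


if __name__ == "__main__":
    # Growth rates of 10% and 20% per year, as reported for embedded systems.
    for rate in (0.10, 0.20):
        years = doubling_time_years(rate)
        print(f"{rate:.0%} per year: size doubles in about {years:.1f} years")

    # 20 and 70 electronic control units, the range reported for premium cars.
    for units in (20, 70):
        pairs = max_pairwise_couplings(units)
        print(f"{units} control units: up to {pairs} pairwise couplings")
```

Under these assumptions, a system growing at 10% to 20% per year doubles in size roughly every 4 to 7 years, and the number of possible pairwise couplings grows much faster than the number of components, which is one way to read the claim that no one can fully understand or test all the interacting parts.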

Constant change contributes significantly to the dynamics of design activities because it forces designers to work without extensive experience with, and deep understanding of, a design situation. The force awakens pattern, for instance, is often a consequence of rapid changes in the external world in reaction to a design effort, as well as of designers’ lack of understanding of, and experience with, similar situations. The co-evolution of problem-solution pattern arises partly because, due to the fast pace of change, designers are dealing with new situations for which there are few previous projects and little prior experience to draw on. On the other hand, the design-by-buzzword pattern may itself contribute to constant change, as it aims at introducing new opportunities that may significantly change design situations.

Questions to Ask Yourself

- Are you working in a dynamic domain where things change quickly?

- How do you balance the need to spend more time understanding the situation and designing the outcome versus producing results rapidly?

- How do you deal with uncertainty in design situations?

- How do you react to change?

- How do you respond to significant unforeseen problems?

- Have you ever canceled your design effort due to significant changes in the design situation or resources?

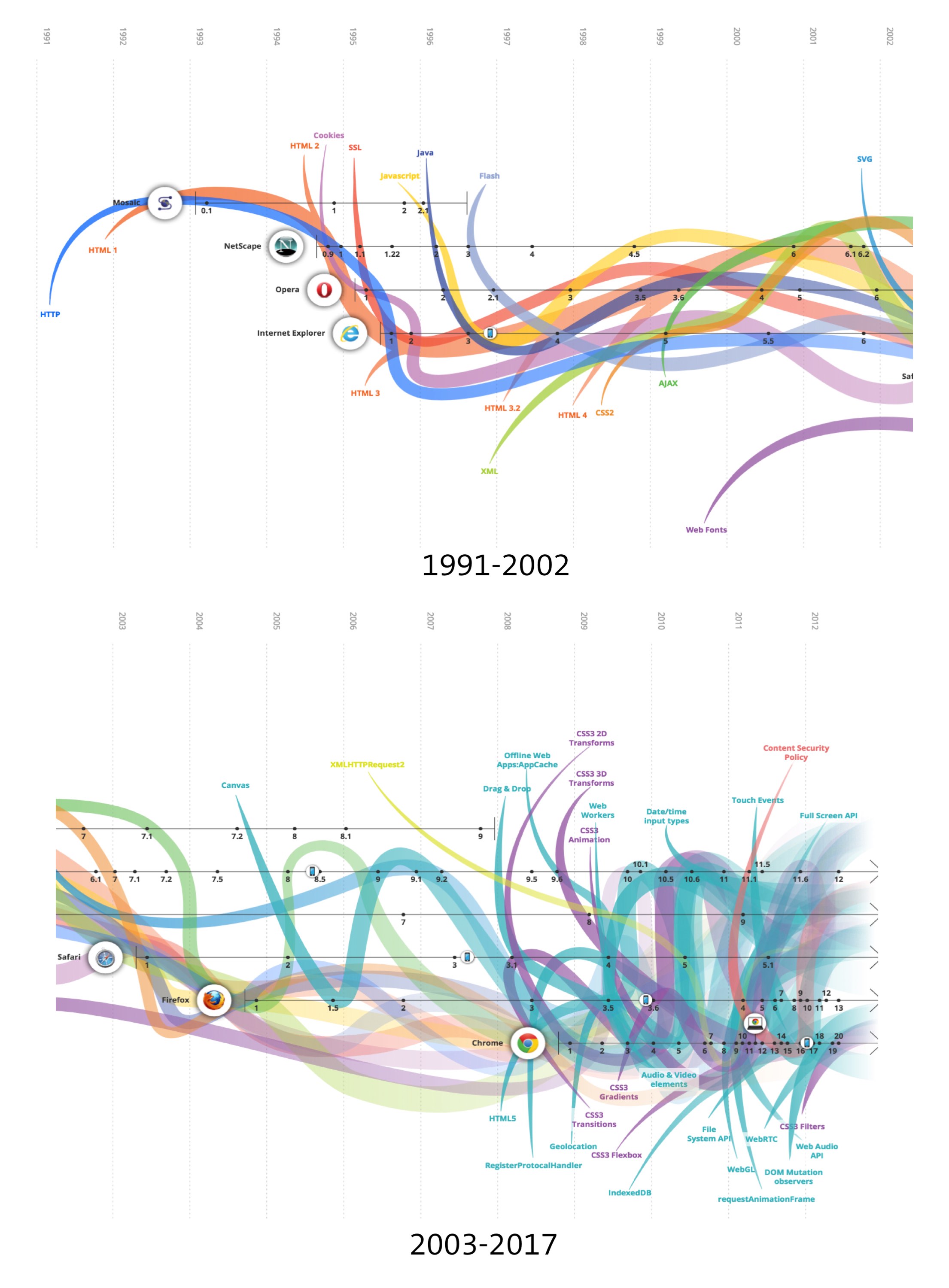

Cover Art

A snapshot from the http://www.evolutionoftheweb.com/ website. The website illustrates the constant evolution of existing web technologies and the frequent introduction of new ones.

Footnotes

1. See http://pages.experts-exchange.com/processing-power-compared/ for an effective visualization of the 1 trillion-fold increase in processing power from 1956 to 2015.